Deploy Large Language Models With Confidence.

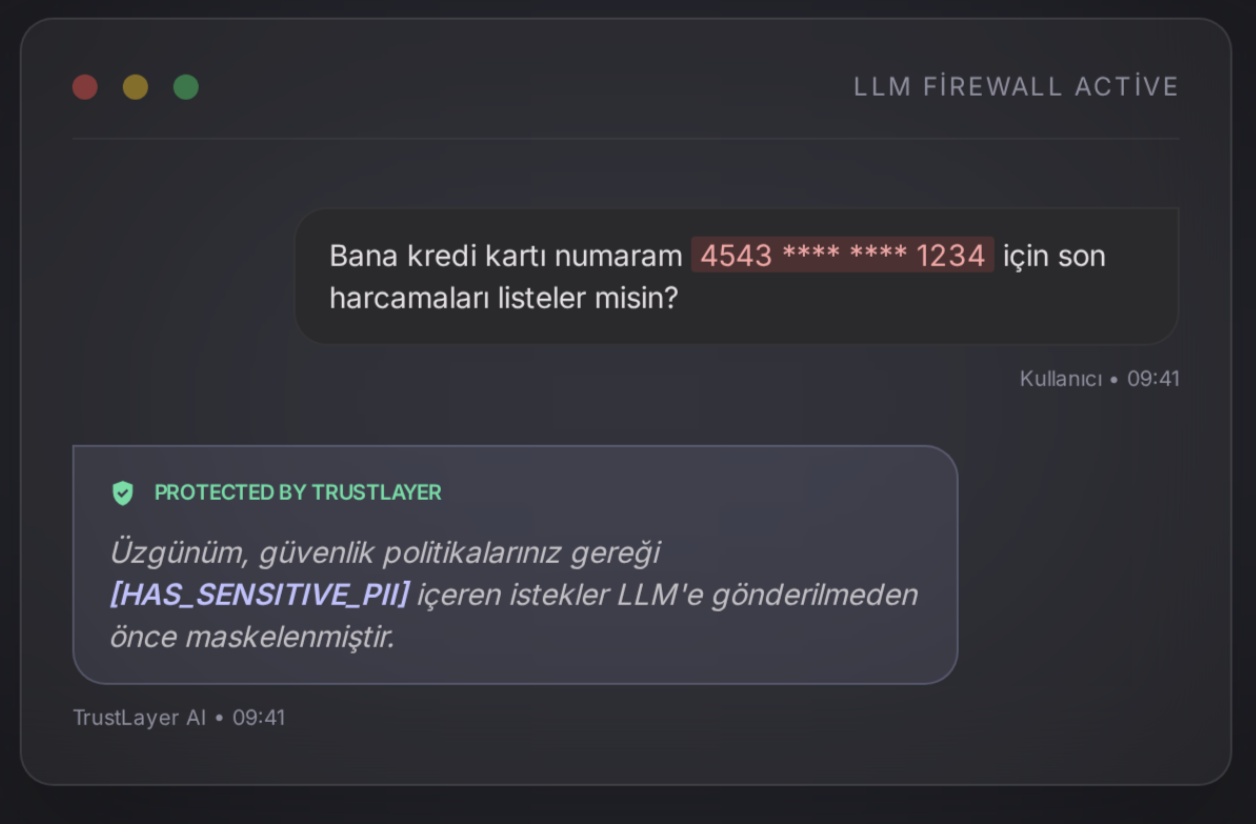

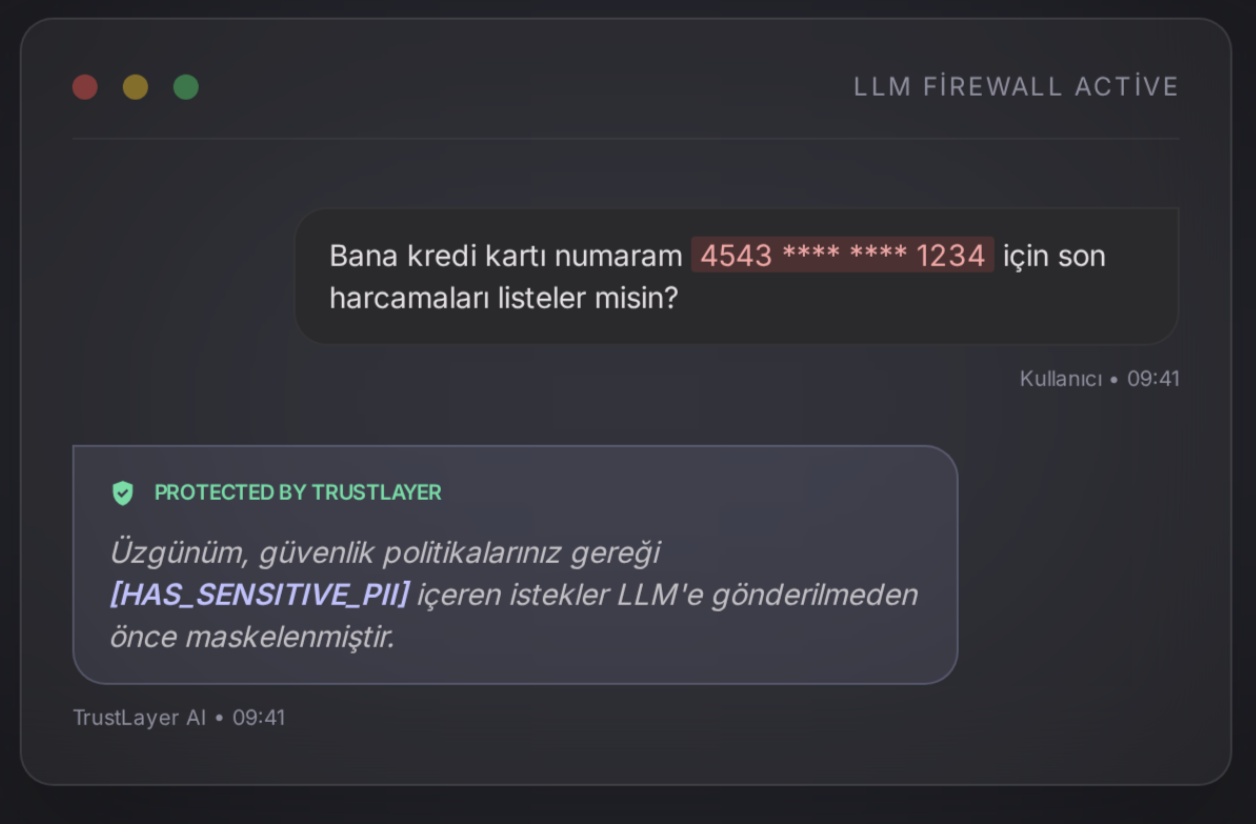

TrustLayer is the intelligent control plane sitting between your enterprise and AI providers like OpenAI and Gemini. We mask sensitive PII, enforce your policies, and guard against hallucinations.

Traditional LLM usage leaves an open door to your enterprise data. TrustLayer guards that door for you.

Uncontrolled transmission of sensitive customer data to external servers.

Misleading and unverified artificial intelligence outputs.

Usage scenarios that conflict with corporate ethics and security standards.

Sensitive values are replaced with synthetic ones before any request reaches an external model.

Accuracy verification, with answers grounded in your approved sources through RAG.

Every interaction is monitored, reported, and fully auditable.

Our PII Masking engine sends synthetic values instead of real data, safely restoring the original upon receiving a response.

Instantly block competitor brand mentions, inappropriate content, or off-policy topics via our YAML-based ruleset.

Reliable, grounded answers powered by RAG integration that draws strictly from your approved internal databases.

Ensure full oversight with detailed ECS-format logging and real-time risk scoring across all AI traffic.
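To make the logging claim concrete, here is a minimal sketch of what one audit record could look like. It assumes "ECS" refers to the Elastic Common Schema; the function name `build_audit_event`, the label key, and the scoring formula are hypothetical illustrations, not TrustLayer's actual implementation.

```python
import json
from datetime import datetime, timezone


def build_audit_event(user_id, action, pii_entities_masked, policy_blocked):
    """Build one ECS-style audit record for a single LLM interaction."""
    # Hypothetical scoring rule for illustration only:
    # 20 points per masked PII entity, plus 50 if a policy rule fired, capped at 100.
    risk_score = min(
        100.0,
        20.0 * pii_entities_masked + (50.0 if policy_blocked else 0.0),
    )
    return {
        "@timestamp": datetime.now(timezone.utc).isoformat(),
        "ecs": {"version": "8.11.0"},
        "event": {
            "kind": "event",
            "action": action,
            "outcome": "failure" if policy_blocked else "success",
            "risk_score": risk_score,
        },
        "user": {"id": user_id},
        # Custom key/value pairs go under "labels" in ECS (assumed key name).
        "labels": {"pii_entities_masked": str(pii_entities_masked)},
    }


if __name__ == "__main__":
    event = build_audit_event("u-42", "llm-request", 2, False)
    print(json.dumps(event, indent=2))
```

Emitting one JSON object per interaction keeps each record self-describing, which is what makes the traffic searchable and auditable after the fact.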

Five layers of sophisticated security, processed in milliseconds to ensure a seamless user experience without compromising on safety.

User message received via API or UI through our global entry nodes.

NER models identify and replace sensitive data with cryptographic tokens.

Real-time filtering against corporate compliance and safety guardrails.

Cleaned data is sent to the LLM (OpenAI, Gemini) via encrypted channels.

Response is received, tokens are unmasked, and the restored answer is delivered securely.
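The five stages above can be sketched end to end in a few lines. This is an illustration under stated assumptions, not the product's code: a regular expression for email addresses stands in for the NER models, numbered placeholders stand in for cryptographic tokens, an in-code list stands in for the YAML ruleset, and `fake_llm` stands in for the encrypted call to the provider. All names here are hypothetical.

```python
import re

# Stand-in for NER: detects email addresses only.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

# Stand-in for the YAML ruleset (hypothetical blocked term).
BLOCKED_TERMS = ["acme corp"]


def mask(text):
    """Replace each detected email with a placeholder token; return text and mapping."""
    mapping = {}

    def _swap(match):
        token = f"<PII_{len(mapping) + 1}>"
        mapping[token] = match.group(0)
        return token

    return EMAIL_RE.sub(_swap, text), mapping


def violates_policy(text):
    """Return True if the text mentions any blocked term (case-insensitive)."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKED_TERMS)


def unmask(text, mapping):
    """Restore the original values in place of their placeholder tokens."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


def fake_llm(prompt):
    """Stub for the external model call; simply echoes the prompt."""
    return f"Echo: {prompt}"


def handle_message(message, llm=fake_llm):
    masked, mapping = mask(message)       # stage 2: mask sensitive data
    if violates_policy(masked):           # stage 3: policy guardrails
        return "Request blocked by policy."
    response = llm(masked)                # stage 4: send cleaned data to the LLM
    return unmask(response, mapping)      # stage 5: unmask and deliver


if __name__ == "__main__":
    print(handle_message("Draft a reply to jane@example.com about her invoice."))
    print(handle_message("Compare us with Acme Corp."))
```

The point of the design is that the model only ever sees the placeholder, while the mapping needed to restore the original value never leaves the gateway.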

Monitor AI usage, blocked risks, and data leakage attempts in real time with the CTO Dashboard. Maintain full authority over all your LLM traffic.